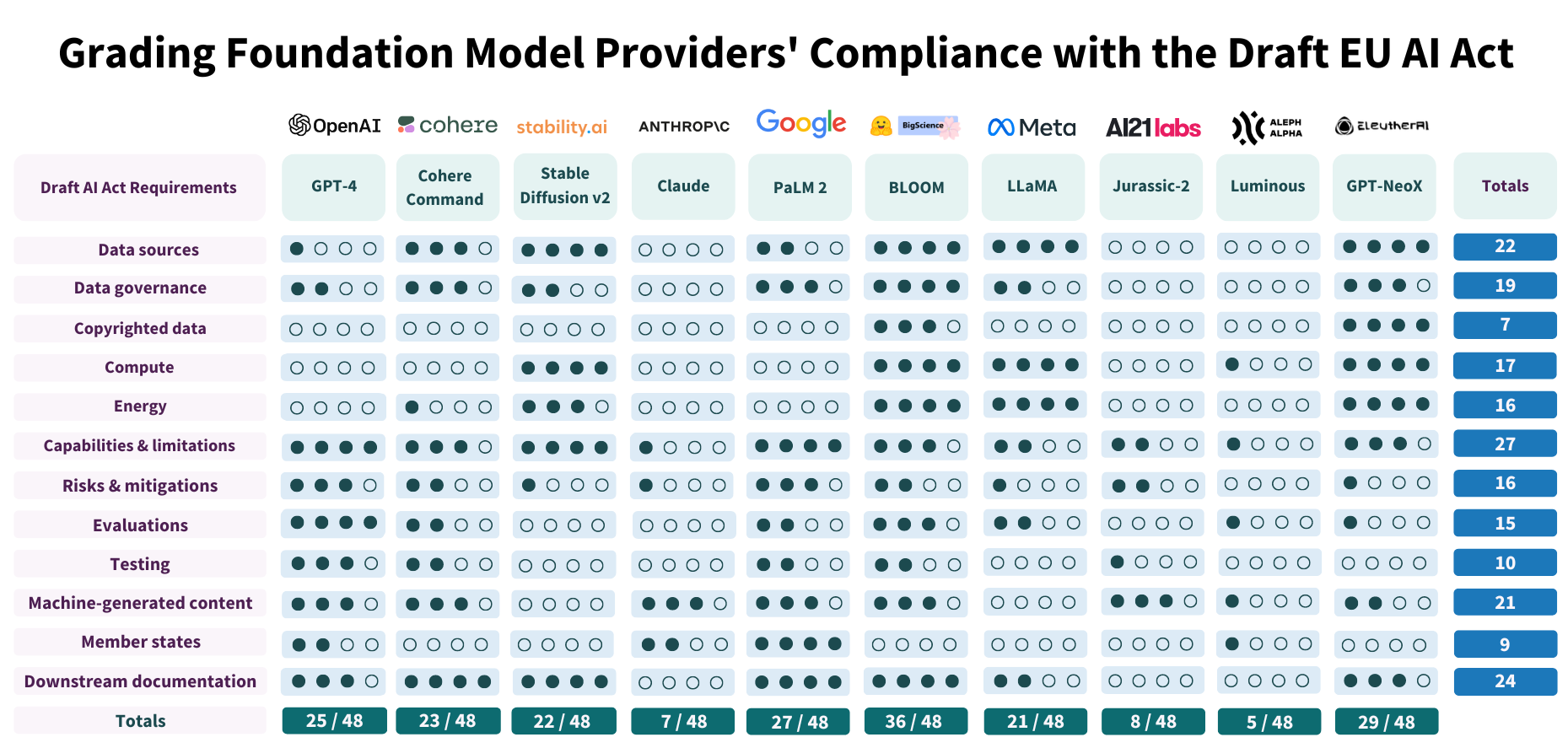

Researchers at Stanford have done a great job evaluating how well several foundation models comply with the proposed EU AI Act.

The results are:

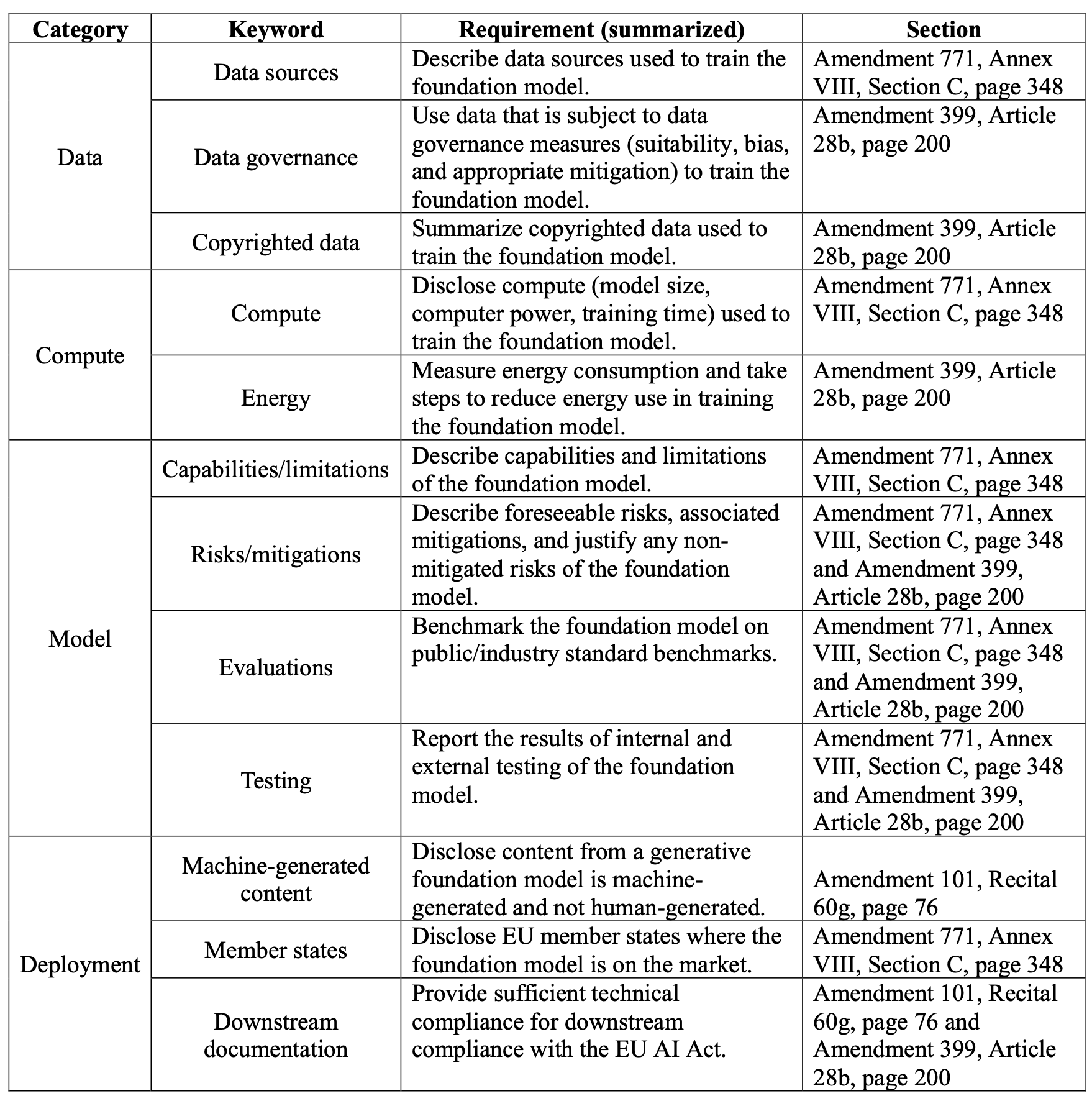

The 12 requirements assessed, with the rubric used to score each (0 to 4 points per requirement):

1. Data sources (additive)

- \+1: Very generic/vague description (e.g. “Internet data”)

- \+1: Description of stages involved (e.g. training, instruction-tuning)

- \+1: Sizes (relative or absolute) of different data sources

- \+1: Fine-grained sourcing (e.g. specific URLs like Wikipedia, Reddit)

2. Data governance

- 0 points: No discussion of data governance

- 1 point: Vague mention of governance with no concreteness

- 2-3 points: Some grounded discussion or specific protocols around governance related to suitability and/or bias of data sources

- 4 points: Explicit constraint that only data subject to governance measures is included

3. Copyrighted data

- 0 points: No description.

- 1 point: Very generic/vague acknowledgement of copyright (e.g. tied to “Internet data”)

- 2-3 points: Some grounded discussion of specific copyrighted materials

- 4 points: Fine-grained separation of copyrighted vs. non-copyrighted data

4. Compute (additive)

- \+1: model size

- \+1: training time as well as number and type of hardware units (e.g. number of A100s)

- \+1: training FLOPs

- \+1: Broader context (e.g. compute provider, how FLOPs are measured)

5. Energy (additive)

- \+1: energy usage

- \+1: emissions

- \+1: discussion of measurement strategy (e.g. cluster location and related details)

- \+1: discussion of mitigations to reduce energy usage/emissions

6. Capabilities and limitations

- 0 points: No description.

- 1 point: Very generic/vague description

- 2-3 points: Some grounded discussion of specific capabilities and limitations

- 4 points: Fine-grained discussion grounded in evaluations/specific examples

7. Risks and mitigations (additive)

- \+1: list of risks

- \+1: list of mitigations

- \+1: description of the extent to which mitigations successfully reduce risk

- \+1: justification for why non-mitigated risks cannot be mitigated

8. Evaluations (additive)

- \+1: measurement of accuracy on multiple benchmarks

- \+1: measurement of unintentional harms (e.g. bias)

- \+1: measurement of intentional harms (e.g. malicious use)

- \+1: measurement of other factors (e.g. robustness, calibration, user experience)

9. Testing (additive)

- \+1 or +2: disclosure of results and process of (significant) internal testing

- \+1: external evaluations due to external access (e.g. HELM)

- \+1: external red-teaming or adversarial evaluation/stress-testing (e.g. ARC)

10. Machine-generated content

- \+1-3 points: Disclosure that content is machine-generated within the direct purview of the foundation model provider (e.g. when using the OpenAI API).

- \+1 point: Disclosed mechanism to ensure content is identifiable as machine-generated even beyond the direct purview of the foundation model provider (e.g. watermarking).

11. Member states

- 0 points: No description of deployment practices in relation to the EU.

- 2 points: Disclosure of explicitly permitted/prohibited EU member states at the level of the organization's operations.

- 4 points: Fine-grained discussion of practice per state, including any discrepancies in how the foundation model is placed on the market or put into service that differ across EU member states.

12. Downstream documentation

- 0 points: No description of any informational obligations or documentation.

- 1 point: Generic acknowledgement that information should be provided downstream.

- 2 points: Existence of relevant documentation, including in public reports, though the mechanism for supplying it to downstream developers is unclear.

- 3-4 points: (Fairly) clear mechanism for ensuring the foundation model provider supplies appropriate documentation to downstream providers.
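To make the arithmetic behind these rubrics concrete, here is a minimal sketch (not the researchers' code) of how the scores combine. It assumes that each requirement is capped at 4 points, which follows from the rubrics above, so the maximum total is 12 × 4 = 48. The helper names `additive_score` and `total_score` are hypothetical. `additive_score` only covers the plain "+1 per criterion" rubrics (data sources, compute, energy, risks and mitigations, evaluations). Testing and machine-generated content can award more than +1 for a single item, and the remaining rubrics are graded directly on the 0-4 scale.

```python
# Hypothetical sketch of the rubric arithmetic; not the Stanford team's tooling.
REQUIREMENTS = [
    "Data sources", "Data governance", "Copyrighted data", "Compute",
    "Energy", "Capabilities and limitations", "Risks and mitigations",
    "Evaluations", "Testing", "Machine-generated content",
    "Member states", "Downstream documentation",
]
MAX_PER_REQUIREMENT = 4
MAX_TOTAL = MAX_PER_REQUIREMENT * len(REQUIREMENTS)  # 12 requirements x 4 = 48


def additive_score(criteria_met: dict[str, bool]) -> int:
    """Additive rubric: +1 for each criterion the provider's disclosure satisfies."""
    return sum(1 for met in criteria_met.values() if met)


def total_score(scores: dict[str, int]) -> int:
    """Sum per-requirement scores; requirements not listed count as 0."""
    for name, score in scores.items():
        if name not in REQUIREMENTS:
            raise ValueError(f"unknown requirement: {name}")
        if not 0 <= score <= MAX_PER_REQUIREMENT:
            raise ValueError(f"{name}: score {score} outside 0-{MAX_PER_REQUIREMENT}")
    return sum(scores.get(name, 0) for name in REQUIREMENTS)


# Illustrative input for a hypothetical provider, not a real model's result.
compute = additive_score({
    "model size": True,
    "training time and hardware": True,
    "training FLOPs": False,
    "broader context": False,
})
print(total_score({"Compute": compute, "Energy": 0, "Data sources": 3}), "/", MAX_TOTAL)  # 5 / 48
```

Validating each score against the 0-4 range catches data-entry mistakes early. Treating missing requirements as 0 mirrors the "0 points: No description" entries in the rubrics.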

Full list of requirements the researchers identified in the draft AI Act:

- Registry [Article 39, item 69, page 8 as well as Article 28b, paragraph 2g, page 40]. In order to facilitate the work of the Commission and the Member States in the artificial intelligence field as well as to increase the transparency towards the public, providers of high-risk AI systems other than those related to products falling within the scope of relevant existing Union harmonization legislation, should be required to register their high-risk AI system and foundation models in an EU database, to be established and managed by the Commission. This database should be freely and publicly accessible, easily understandable and machine-readable. The database should also be user friendly and easily navigable, with search functionalities at minimum allowing the general public to search the database for specific high-risk systems, locations, categories of risk under Annex IV and keywords. Deployers who are public authorities or European Union institutions, bodies, offices and agencies or deployers […]

- Provider name [Annex VIII, Section C, page 24]. Name, address and contact details of the provider.

- Model name [Annex VIII, Section C, page 24]. Trade name and any additional unambiguous reference allowing the identification of the foundation model.

- Data sources [Annex VIII, Section C, page 24]. Description of the data sources used in the development of the foundation model.

- Capabilities and limitations [Annex VIII, Section C, page 24]. Description of the capabilities and limitations of the foundation model.

- Risks and mitigations [Annex VIII, Section C, page 24 and Article 28b, paragraph 2a, page 39]. The reasonably foreseeable risks and the measures that have been taken to mitigate them as well as remaining non-mitigated risks with an explanation on the reason why they cannot be mitigated.

- Compute [Annex VIII, Section C, page 24]. Description of the training resources used by the foundation model including computing power required, training time, and other relevant information related to the size and power of the model.

- Evaluations [Annex VIII, Section C, page 24 as well as Article 28b, paragraph 2c, page 39]. Description of the model’s performance, including on public benchmarks or state of the art industry benchmarks.

- Testing [Annex VIII, Section C, page 24 as well as Article 28b, paragraph 2c, page 39]. Description of the results of relevant internal and external testing and optimisation of the model.

- Member states [Annex VIII, Section C, page 24]. Member States in which the foundation model is or has been placed on the market, put into service or made available in the Union.

- Downstream documentation [Annex VIII, 60g, page 29 as well as Article 28b, paragraph 2e, page 40]. Also, foundation models should have information obligations and prepare all necessary technical documentation for potential downstream providers to be able to comply with their obligations under this Regulation.

- Machine-generated content [Annex VIII, 60g, page 29]. Generative foundation models should ensure transparency about the fact the content is generated by an AI system, not by humans.

- Pre-market compliance [Article 28b, paragraph 1, page 39]. A provider of a foundation model shall, prior to making it available on the market or putting it into service, ensure that it is compliant with the requirements set out in this Article, regardless of whether it is provided as a standalone model or embedded in an AI system or a product, or provided under free and open source licenses, as a service, as well as other distribution channels.

- Data governance [Article 28b, paragraph 2b, page 39]. Process and incorporate only datasets that are subject to appropriate data governance measures for foundation models, in particular measures to examine the suitability of the data sources and possible biases and appropriate mitigation.

- Energy [Article 28b, paragraph 2d, page 40]. Design and develop the foundation model, making use of applicable standards to reduce energy use, resource use and waste, as well as to increase energy efficiency, and the overall efficiency of the system. This shall be without prejudice to relevant existing Union and national law and this obligation shall not apply before the standards referred to in Article 40 are published. They shall be designed with capabilities enabling the measurement and logging of the consumption of energy and resources, and, where technically feasible, other environmental impact the deployment and use of the systems may have over their entire lifecycle.

- Quality management [Article 28b, paragraph 2f, page 40]. Establish a quality management system to ensure and document compliance with this Article, with the possibility to experiment in fulfilling this requirement.

- Upkeep [Article 28b, paragraph 3, page 40]. Providers of foundation models shall, for a period ending 10 years after their foundation models have been placed on the market or put into service, keep the technical documentation referred to in paragraph 1(c) at the disposal of the national competent authorities.

- Law-abiding generated content [Article 28b, paragraph 4b, page 40]. Train, and where applicable, design and develop the foundation model in such a way as to ensure adequate safeguards against the generation of content in breach of Union law in line with the generally acknowledged state of the art, and without prejudice to fundamental rights, including the freedom of expression.

- Training on copyrighted data [Article 28b, paragraph 4c, page 40]. Without prejudice to national or Union legislation on copyright, document and make publicly available a sufficiently detailed summary of the use of training data protected under copyright law.

- Adherence to general principles [Article 4a, paragraph 1, pages 142-143]. All operators falling under this Regulation shall make their best efforts to develop and use AI systems or foundation models in accordance with the following general principles establishing a high-level framework that promotes a coherent human-centric European approach to ethical and trustworthy Artificial Intelligence, which is fully in line with the Charter as well as the values on which the Union is founded:
  - a) ‘human agency and oversight’ means that AI systems shall be developed and used as a tool that serves people, respects human dignity and personal autonomy, and that is functioning in a way that can be appropriately controlled and overseen by humans.
  - b) ‘technical robustness and safety’ means that AI systems shall be developed and used in a way to minimize unintended and unexpected harm as well as being robust in case of unintended problems and being resilient against attempts to alter the use or performance of the AI system so as to allow unlawful use by malicious third parties.
  - c) ‘privacy and data governance’ means that AI systems shall be developed and used in compliance with existing privacy and data protection rules, while processing data that meets high standards in terms of quality and integrity.
  - d) ‘transparency’ means that AI systems shall be developed and used in a way that allows appropriate traceability and explainability, while making humans aware that they communicate or interact with an AI system as well as duly informing users of the capabilities and limitations of that AI system and affected persons about their rights.
  - e) ‘diversity, non-discrimination and fairness’ means that AI systems shall be developed and used in a way that includes diverse actors and promotes equal access, gender equality and cultural diversity, while avoiding discriminatory impacts and unfair biases that are prohibited by Union or national law.
  - f) ‘social and environmental well-being’ means that AI systems shall be developed and used in a sustainable and environmentally friendly manner as well as in a way to benefit all human beings, while monitoring and assessing the long-term impacts on the individual, society and democracy.

  For foundation models, the general principles are translated into and complied with by providers by means of the requirements set out in Articles 28 to 28b.

- System is designed so users know it's an AI [Article 52(1), paragraph 1; not in the compromise text, but invoked in Article 28b, paragraph 4a, page 40]. Providers shall ensure that AI systems intended to interact with natural persons are designed and developed in such a way that natural persons are informed that they are interacting with an AI system, unless this is obvious from the circumstances and the context of use. This obligation shall not apply to AI systems authorised by law to detect, prevent, investigate and prosecute criminal offences, unless those systems are available for the public to report a criminal offence.

- Appropriate levels [Article 28b, paragraph 2c, page 39]. Design and develop the foundation model in order to achieve throughout its lifecycle appropriate levels of performance, predictability, interpretability, corrigibility, safety and cybersecurity assessed through appropriate methods such as model evaluation with the involvement of independent experts, documented analysis, and extensive testing during conceptualisation, design, and development.